Data drift is one of the most common reasons machine learning systems degrade in production. A model can look excellent during validation and still fail quietly months later because the input data no longer looks like the training data. That is why data drift detection is now a core part of MLOps and AI governance, not just a nice add-on for mature teams.

Cloud providers and standards bodies now treat monitoring as an operational requirement. NIST’s AI Risk Management Framework page highlights the 2024 Generative AI Profile, which reinforces lifecycle risk management practices relevant to monitoring and control.

What Data Drift Means

Basic Idea

Data drift happens when the statistical properties of input data change over time. Evidently AI defines data drift as a shift in the distributions of ML model input features and notes that this can reduce model performance when production data no longer matches what the model was trained to handle.

A simple example is a retail demand model trained mostly on in-store purchases. If a mobile app campaign suddenly increases online ordering, the input distribution changes. The model may still run normally, but predictions become less reliable because customer behavior has shifted.

Data Drift vs Other Drift Types

Teams often confuse data drift with related problems. The distinction matters because the response strategy changes.

- Data drift means input data distributions change.

- Concept drift means the relationship between inputs and outcomes changes.

- Prediction drift means the distribution of model outputs changes.

- Training-serving skew means production data differs from training data, often because of pipeline or feature mismatches.

Evidently’s documentation explains these distinctions clearly, including that training-serving skew is often visible soon after deployment while data drift is usually a gradual production-time change.

Why Data Drift Detection Matters

Silent Performance Loss

One of the biggest risks is that drift can degrade model quality before anyone notices. In many use cases, ground truth labels arrive late, or not at all. Fraud labels may take days or weeks. Credit outcomes may take months. During that gap, drift detection acts as an early warning signal.

Business and Compliance Impact

Data drift affects more than technical accuracy. It can increase false positives in fraud systems, reduce recommendation relevance, distort pricing, or create unfair outcomes in screening tools. In regulated industries, this can become a governance and audit issue, not just a model tuning problem.

Operational Stability

Drift detection helps teams decide when to retrain, recalibrate, or investigate upstream data issues. Without it, retraining becomes guesswork, and many teams either retrain too often or far too late. Both are expensive. Naturally, organizations prefer discovering failures after a customer escalation, because tradition.

Common Detection Methods

Statistical Distribution Tests

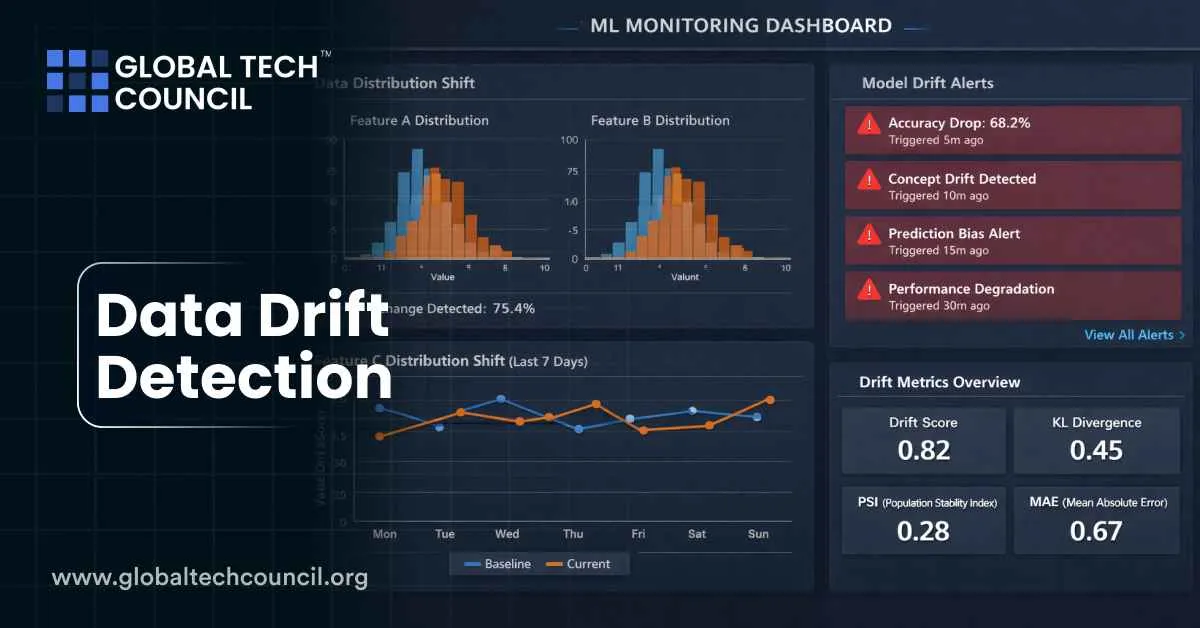

The most common approach compares baseline data (usually training or recent stable production data) with current production data. Teams use statistical measures to quantify how much a feature distribution has changed.

AWS Well-Architected guidance for evaluating data drift specifically points to metrics such as KL divergence, Jensen-Shannon divergence, and Population Stability Index (PSI) for comparing distributions. It also recommends establishing thresholds and alerting mechanisms.

These methods are useful for numerical and categorical features, especially when combined with per-feature thresholds and severity scoring.
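As a rough illustration of the three metrics named above, the sketch below bins a numerical feature on the baseline's quantiles and then computes KL divergence, Jensen-Shannon divergence, and PSI over the binned shares. The function names, the ten-bin default, and the small `eps` floor for empty bins are assumptions made for this example, not something taken from the AWS guidance.

```python
import math
from bisect import bisect_right


def quantile_edges(baseline, n_bins=10):
    """Interior bin edges taken from the baseline's own quantiles."""
    ordered = sorted(baseline)
    return [ordered[len(ordered) * i // n_bins] for i in range(1, n_bins)]


def bin_fractions(values, edges, eps=1e-6):
    """Share of values per bin, floored at eps so the logarithms stay finite."""
    counts = [0] * (len(edges) + 1)
    for value in values:
        counts[bisect_right(edges, value)] += 1
    return [max(count / len(values), eps) for count in counts]


def kl_divergence(p, q):
    """KL(p || q) over two aligned lists of bin shares (asymmetric)."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q))


def js_divergence(p, q):
    """Jensen-Shannon divergence: symmetric, built from KL against the midpoint."""
    mid = [(pi + qi) / 2 for pi, qi in zip(p, q)]
    return 0.5 * kl_divergence(p, mid) + 0.5 * kl_divergence(q, mid)


def psi(expected, actual):
    """Population Stability Index between baseline and current bin shares."""
    return sum((a - e) * math.log(a / e) for e, a in zip(expected, actual))
```

In use, the edges are computed once from the baseline and reused for every production window, so both distributions are always compared over the same bins. For a categorical feature, the category shares can be passed to the same three functions directly.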

Segment-Based Drift Checks

Global drift scores can hide problems. A feature may look stable overall but drift heavily in an important customer segment, location, device type, or time window. Strong drift programs therefore monitor slices, not just full-population distributions.

For example, a fraud model may look stable globally while drifting sharply in mobile-app transactions from one region after a payment workflow change.
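A minimal sketch of a slice-level check, assuming records arrive as dictionaries and using total variation distance on a categorical feature. The distance choice, the function names, and the `region` and `method` keys in the usage note are hypothetical.

```python
from collections import Counter, defaultdict


def category_shares(values):
    """Relative frequency of each category in a list of values."""
    counts = Counter(values)
    total = sum(counts.values())
    return {category: count / total for category, count in counts.items()}


def total_variation(baseline, current):
    """Half the summed absolute difference in category shares (0 = same, 1 = disjoint)."""
    p, q = category_shares(baseline), category_shares(current)
    return 0.5 * sum(abs(p.get(k, 0.0) - q.get(k, 0.0)) for k in set(p) | set(q))


def drift_by_segment(baseline_rows, current_rows, segment_key, feature_key):
    """Drift score per segment, for segments present in both datasets."""
    def group(rows):
        grouped = defaultdict(list)
        for row in rows:
            grouped[row[segment_key]].append(row[feature_key])
        return grouped

    base, cur = group(baseline_rows), group(current_rows)
    return {seg: total_variation(base[seg], cur[seg]) for seg in base.keys() & cur.keys()}
```

Calling `drift_by_segment(baseline_rows, current_rows, "region", "method")` next to a single global `total_variation` score makes the masking effect visible: a small segment can shift heavily while the global number barely moves.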

Prediction and Output Monitoring

When labels are delayed, teams often monitor prediction drift as a proxy. If output probabilities suddenly shift, that can indicate an environmental change, data pipeline issue, or model failure. Evidently notes that prediction drift can be a practical signal when direct performance measurement is not yet available.
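One way to put a number on a shift in output probabilities is the two-sample Kolmogorov-Smirnov statistic, which is the largest gap between the two empirical cumulative distributions. The sketch below is a plain-Python version under that assumption. The source does not prescribe this test, and the critical value uses the standard large-sample approximation.

```python
import math


def ks_statistic(reference_scores, current_scores):
    """Largest gap between the empirical CDFs of two score samples."""
    a, b = sorted(reference_scores), sorted(current_scores)
    i = j = 0
    gap = 0.0
    while i < len(a) and j < len(b):
        x = min(a[i], b[j])
        # Advance both samples past x, then compare their cumulative shares.
        while i < len(a) and a[i] <= x:
            i += 1
        while j < len(b) and b[j] <= x:
            j += 1
        gap = max(gap, abs(i / len(a) - j / len(b)))
    return gap


def ks_critical_value(n, m, alpha=0.05):
    """Large-sample rejection threshold for the two-sample KS statistic."""
    return math.sqrt(-math.log(alpha / 2) / 2) * math.sqrt((n + m) / (n * m))
```

With high traffic, the critical value becomes very small and even trivial shifts look significant, so many teams would likely alert on the statistic itself against a tuned threshold rather than on significance alone.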

Data Quality Plus Drift

Many production failures look like drift but are actually data quality issues, such as missing fields, changed formats, or broken feature engineering. Good monitoring separates these but tracks both together. A schema change can break a model faster than a slow statistical drift.
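The sketch below shows the kind of quality gate that could run before any drift metric, so that a broken field is reported as a data quality issue rather than as drift. The schema format, the 5% missing-rate limit, and the message strings are assumptions for illustration.

```python
def quality_issues(rows, schema, max_missing_rate=0.05):
    """Return readable issues: missing values, wrong types, unexpected columns."""
    issues = []
    for column, expected_type in schema.items():
        present = [row[column] for row in rows if row.get(column) is not None]
        missing_rate = 1 - len(present) / len(rows) if rows else 1.0
        if missing_rate > max_missing_rate:
            issues.append(f"{column}: missing rate {missing_rate:.0%}")
        if any(not isinstance(value, expected_type) for value in present):
            issues.append(f"{column}: unexpected type")
    # Columns that appear in the data but not in the schema often signal an upstream change.
    unexpected = sorted({key for row in rows for key in row} - set(schema))
    if unexpected:
        issues.append("unexpected columns: " + ", ".join(unexpected))
    return issues


# Hypothetical schema: column name -> accepted Python type(s).
schema = {"amount": (int, float), "country": str}
batch = [
    {"amount": 10.0, "country": "DE"},
    {"amount": "12,50", "country": None},       # changed number format, missing field
    {"amount": 7, "country": "FR", "device": "ios"},
]
print(quality_issues(batch, schema))
```

If the returned list is non-empty, it is usually more informative to fix or quarantine the batch first and compute drift only on data that passes.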

Real-World Examples

Fraud Detection

Fraud patterns evolve constantly. Attackers change devices, payment flows, IP behavior, and transaction timing. A fraud model trained on last quarter’s behavior can degrade quickly if a new scam pattern emerges. Drift detection helps teams flag unusual feature shifts and update rules or retrain models before losses rise.

Retail Forecasting

Promotions, seasonality, app launches, and supply disruptions can all change customer behavior. A demand forecasting model may still produce predictions, but the inputs can shift enough to reduce accuracy. Drift detection helps identify when the system is no longer operating under conditions similar to training.

Healthcare and Insurance

Healthcare and insurance models face changes in patient mix, coding practices, provider workflows, and claims patterns. Drift detection is especially important here because model errors can affect service quality, risk scoring, and operational decisions. Teams often combine drift alerts with human review to reduce harm.

How Teams Implement Drift Monitoring

Build a Baseline

The first step is to define a baseline dataset that represents expected behavior. AWS guidance recommends using training data or a representative subset that performed well in validation as the reference for future comparisons.

A poor baseline creates noisy alerts, so this step matters more than people want to admit.
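A baseline is easier to reuse when it is frozen as a small profile rather than recomputed from raw data on every run. The following sketch stores per-feature summary statistics and quantile bin edges in a JSON-serializable dictionary. The profile layout and the function name are hypothetical.

```python
import json
import statistics


def build_baseline_profile(feature_values, n_bins=10):
    """Summarize each numerical feature of the reference dataset."""
    profile = {}
    for name, values in feature_values.items():
        profile[name] = {
            "count": len(values),
            "mean": statistics.fmean(values),
            "std": statistics.pstdev(values),
            # n_bins - 1 cut points that split the baseline into equal-sized bins.
            "bin_edges": statistics.quantiles(values, n=n_bins),
        }
    return profile


# Hypothetical reference data: one list of values per feature.
reference = {"amount": [float(v) for v in range(1, 101)]}
profile = build_baseline_profile(reference)
serialized = json.dumps(profile, indent=2)  # store next to the model version
```

Versioning the profile alongside the model makes it clear which reference a given alert was computed against, and replacing the baseline becomes an explicit, reviewable step.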

Set Metrics and Thresholds

Choose drift metrics that match the feature type and business risk. Then define thresholds that trigger alerts without flooding the team. AWS also recommends balancing sensitivity so teams catch meaningful changes while avoiding false alarms from minor variation.
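Thresholds can be kept as plain configuration with per-feature overrides, as in this sketch. The 0.10 and 0.25 defaults are a commonly quoted rule of thumb for PSI, not values taken from the AWS guidance, and the class and function names are made up for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DriftThreshold:
    warn: float
    critical: float


# Rule-of-thumb PSI cut-offs; treat them as a starting point to tune, not a standard.
DEFAULT_THRESHOLD = DriftThreshold(warn=0.10, critical=0.25)


def classify(score, threshold=DEFAULT_THRESHOLD):
    """Map a drift score to a severity label."""
    if score >= threshold.critical:
        return "critical"
    if score >= threshold.warn:
        return "warn"
    return "ok"


def evaluate(scores, overrides=None):
    """Classify every feature, using a feature-specific threshold when one is given."""
    overrides = overrides or {}
    return {
        feature: classify(score, overrides.get(feature, DEFAULT_THRESHOLD))
        for feature, score in scores.items()
    }
```

A stricter override for a business-critical feature, such as `{"amount": DriftThreshold(warn=0.05, critical=0.10)}`, raises sensitivity where it matters without making every other feature noisier.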

Monitor on a Schedule

Drift checks can run hourly, daily, or weekly depending on the model’s importance and traffic volume. For high-risk models, more frequent monitoring is usually justified.
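As a small sketch of risk-based cadence, the snippet below decides which models are due for a drift check given when each was last checked. The risk tiers, the intervals, and the record layout are assumptions. In practice this logic usually lives in a scheduler or a managed monitoring service rather than in application code.

```python
from datetime import datetime, timedelta

# Hypothetical cadence per risk tier.
CADENCE = {
    "high": timedelta(hours=1),
    "medium": timedelta(days=1),
    "low": timedelta(weeks=1),
}


def due_checks(models, now):
    """Names of models whose last drift check is at least one cadence interval old."""
    return [
        model["name"]
        for model in models
        if now - model["last_checked"] >= CADENCE[model["risk"]]
    ]


models = [
    {"name": "fraud", "risk": "high", "last_checked": datetime(2024, 1, 8, 10, 30)},
    {"name": "churn", "risk": "medium", "last_checked": datetime(2024, 1, 8, 0, 0)},
    {"name": "forecast", "risk": "low", "last_checked": datetime(2024, 1, 1, 12, 0)},
]
print(due_checks(models, now=datetime(2024, 1, 8, 12, 0)))
```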

Amazon SageMaker Model Monitor supports scheduled monitoring and alerts for production ML systems, including drift detection workflows.

Compare Training-Serving Skew and Ongoing Drift

Google Cloud Vertex AI Model Monitoring distinguishes between training-serving skew and inference drift. Its documentation notes that skew compares production data to training data, while drift tracks changes in production data over time, and that both can be enabled for categorical and numerical features.

This distinction is useful because a model can have no immediate skew at launch and still drift later as the environment changes.
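The difference between the two comparisons can be sketched by scoring each production window twice: once against the training data (skew) and once against the previous production window (drift). The distance used here, the largest gap in any category's share, is chosen only for illustration, and the function names are hypothetical rather than part of any Vertex AI API.

```python
from collections import Counter


def shares(values):
    """Relative frequency of each category."""
    counts = Counter(values)
    total = sum(counts.values())
    return {category: count / total for category, count in counts.items()}


def max_share_gap(reference, current):
    """Largest absolute difference in any single category's share."""
    p, q = shares(reference), shares(current)
    return max((abs(p.get(k, 0.0) - q.get(k, 0.0)) for k in set(p) | set(q)), default=0.0)


def skew_and_drift(training, windows):
    """Score each production window against training and against the prior window."""
    report = []
    for index, window in enumerate(windows):
        report.append({
            "window": index,
            "skew_vs_training": max_share_gap(training, window),
            "drift_vs_previous": max_share_gap(windows[index - 1], window) if index else None,
        })
    return report
```

When the environment changes gradually, the window-over-window score can stay modest while the score against training keeps growing, which is one reason to track both.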

Recent Developments

Stronger Platform Support

A major recent trend is that drift detection is now built into mainstream ML platforms rather than handled only through custom scripts. Google Vertex AI and Amazon SageMaker both provide managed monitoring features for drift and related production quality checks.

This lowers the barrier for teams to implement monitoring, though it does not remove the need for thoughtful thresholds and business context.

Expanded Monitoring Scope

Monitoring is also expanding beyond basic feature drift. AWS documentation highlights related production checks such as bias drift and feature attribution drift in the wider SageMaker monitoring ecosystem. This reflects a shift toward continuous model governance, where teams watch not only data changes but also fairness and explainability signals over time.

Better MLOps Governance Alignment

NIST’s AI risk management guidance and the GenAI profile reinforce the idea that AI risk controls should span the full lifecycle, which supports treating drift detection as a governance practice, not just a model-ops convenience.

Best Practices

Tie Alerts to Actions

A drift alert is only useful if it triggers a clear response. Define who investigates, what gets checked first, and when retraining is warranted.
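One lightweight way to tie alerts to actions is to keep the playbook as data and attach it to every alert. The owners, severity labels, and steps below are placeholders that a team would replace with its own.

```python
# Hypothetical playbook: who investigates and what gets checked first, per severity.
PLAYBOOK = {
    "warn": {
        "owner": "ml-oncall",
        "steps": ["check upstream data quality", "compare affected segments"],
    },
    "critical": {
        "owner": "model-owner",
        "steps": [
            "check upstream data quality",
            "compare affected segments",
            "evaluate on the most recent labeled data",
            "decide whether retraining is warranted",
        ],
    },
}


def route_alert(feature, severity, playbook=PLAYBOOK):
    """Attach an owner and an ordered checklist to a drift alert, or return None."""
    entry = playbook.get(severity)
    if entry is None:
        return None  # severities without a playbook entry do not page anyone
    return {
        "feature": feature,
        "severity": severity,
        "owner": entry["owner"],
        "steps": list(entry["steps"]),
    }
```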

Monitor Features That Matter Most

Not every feature deserves the same alert sensitivity. Prioritize features with high model influence and business impact.
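A simple way to prioritize is to weight each feature's drift score by its influence on the model. The sketch below assumes importance values are already available, for example from the model's own feature importances. Multiplying the two numbers is a heuristic chosen for illustration, not an established metric.

```python
def prioritize(drift_scores, importance, top_n=None):
    """Rank features by drift score weighted by model importance, highest first."""
    ranked = sorted(
        ((name, score * importance.get(name, 0.0)) for name, score in drift_scores.items()),
        key=lambda item: item[1],
        reverse=True,
    )
    return ranked[:top_n] if top_n else ranked


# Hypothetical inputs: raw drift scores and normalized feature importances.
drift_scores = {"zip_code": 0.40, "amount": 0.15, "device": 0.30}
importance = {"zip_code": 0.05, "amount": 0.60, "device": 0.20}
for name, weighted in prioritize(drift_scores, importance):
    print(f"{name}: {weighted:.3f}")
```

In this made-up example, `zip_code` has the largest raw drift but ranks last once importance is taken into account, which is the kind of reordering that keeps alert queues focused.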

Use Human Review for High-Risk Cases

For critical systems, combine automated drift detection with human oversight. Automated alerts are fast. Humans are better at understanding whether a shift is harmful, expected, or caused by a temporary event.

Skills and Certifications

Professionals working on model monitoring and MLOps benefit from both technical and communication skills. A Tech certification can support broader knowledge in systems, analytics, and modern deployment practices. A Machine Learning certification can help build understanding of ML workflows, model behavior, and responsible deployment. A marketing certification and a Deep Tech Certification are also useful when teams need to explain model performance changes, risk controls, and operational decisions to non-technical stakeholders.

Conclusion

Data drift detection is essential for keeping machine learning systems reliable after deployment. Models do not fail only because the algorithm is bad. They often fail because the world changes and nobody is watching the inputs closely enough.

Organizations that treat drift detection as a continuous operational discipline, with baselines, thresholds, monitoring schedules, and response playbooks, are better positioned to maintain model quality and trust. The model may be smart, but production reality is usually smarter.